🌊 Systems of Action

Where power moves when AI becomes cheap.

👋 I’m Ivan. I write about venture capital waves.

Hello there!

Here’s the question I’ve been geeking out about:

When intelligence becomes abundant, where does durable power move next?

The answer might be Systems of Action.

Let’s take a look at where capital is flowing to help answer this:

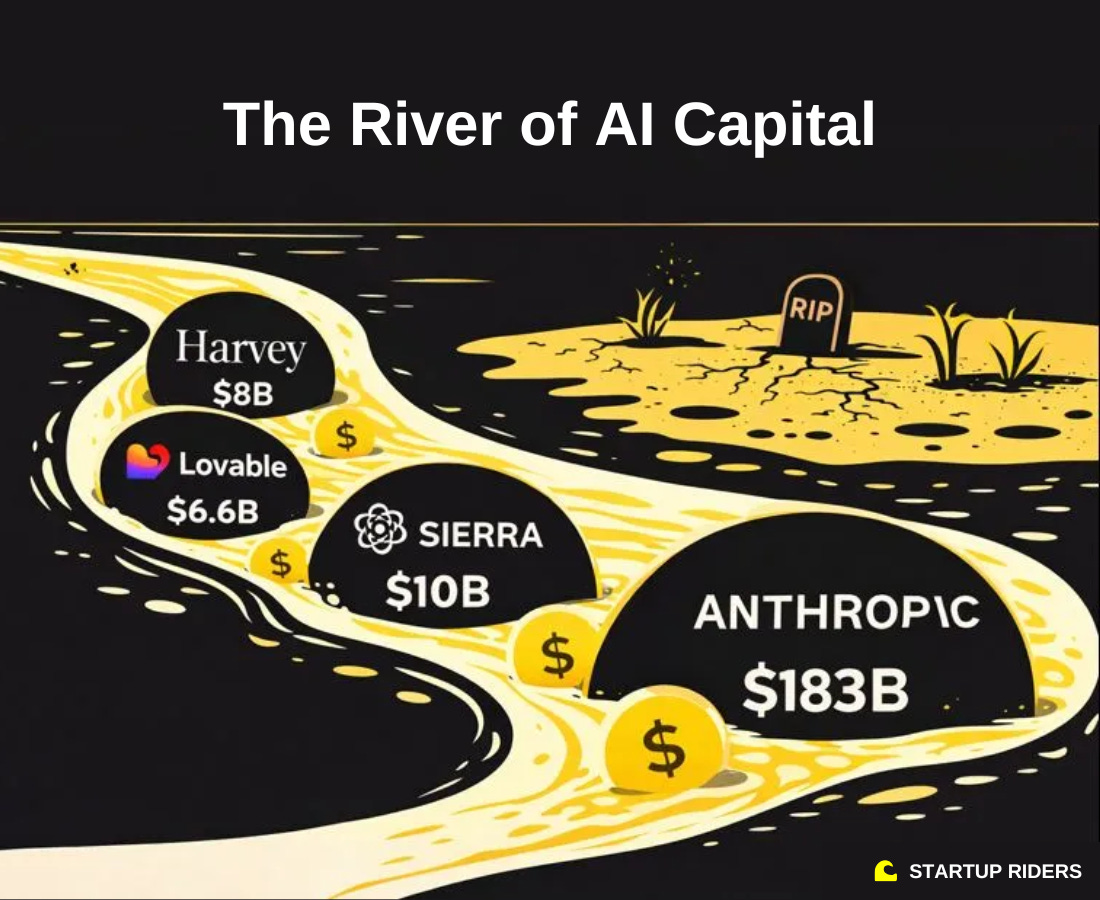

1. The “river of AI capital” is huge, but very concentrated in a handful of companies.

The details:

Capital is showing up all at once (kingmaking), and only in a handful of “the chosen one” companies.

When a company is perceived as “the winner in a category”, a virtuous loop forms:

Faster rounds → higher prices → better talent → better product → better customers → narrative validation → VC FOMO → easier to raise the next round.

Saam Motamedi from Greylock described this as getting into a “river of capital”. Once you’re in the river, everything downstream becomes easier(ish). But if you miss it, even strong companies can find themselves swimming upstream or simply ignored.

This also connects to what we discussed recently under the idea of AI Dutch disease, where certain sectors, geographies, and companies are being massively overfunded, while others are fighting for oxygen.

So what:

At this point in the AI hype-cycle I’m seeing a lot of investors shifting from:

“Is this a good company?” → “Does this company make it into the river early enough?”

Purely from an investor psychology perspective (not saying this is positive at all), fundamentals still matter, but momentum matters more than most people like to admit.

On the other hand, don’t let this “king-making” narrative discourage you because:

As with all cycles, the river (or music) will eventually stop, and

Many of the most successful companies ever were built in the shadows of, or outside, the “hyped” narratives of their time (e.g. Nvidia).

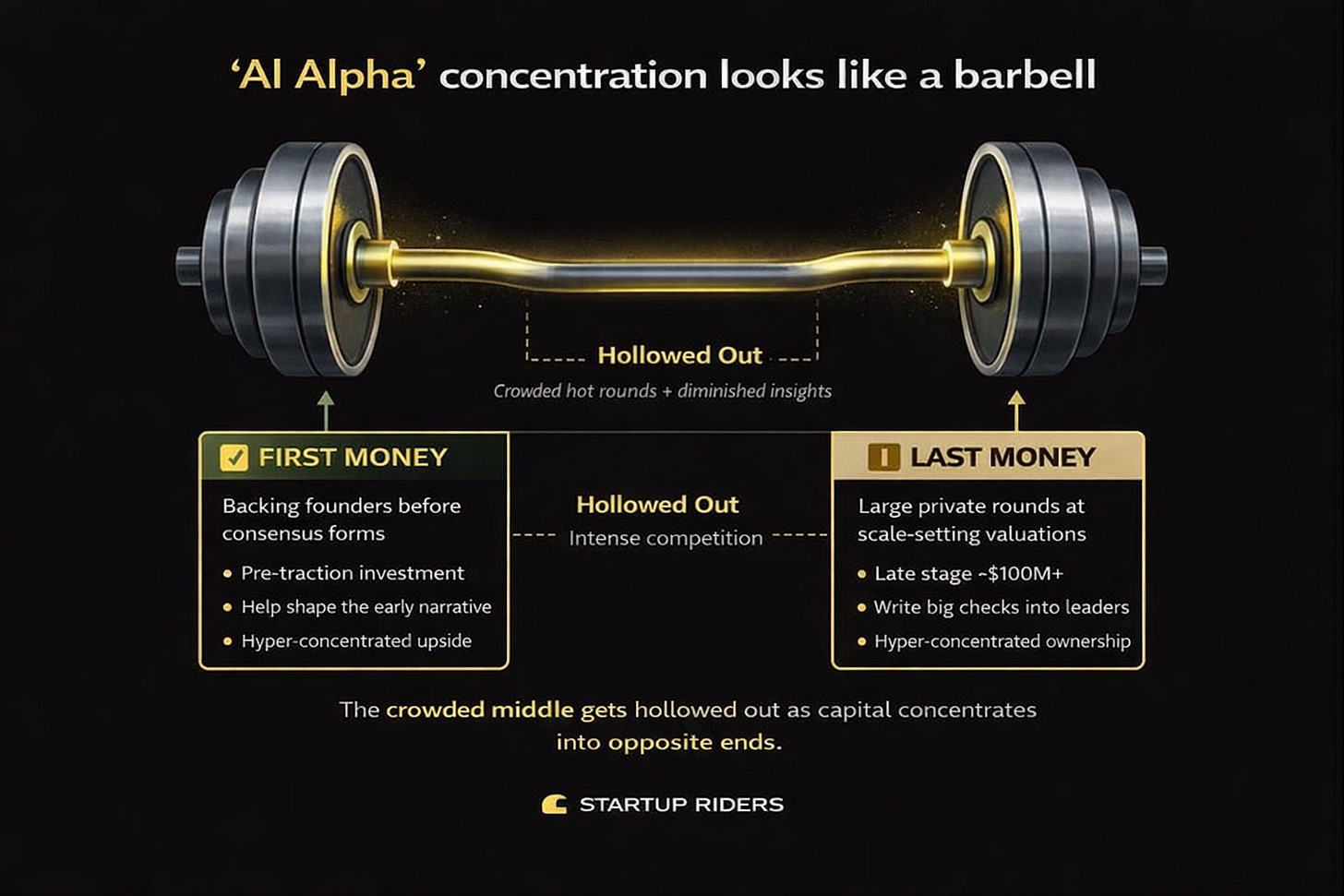

2. AI investing returns look like a barbell: gains sit at the start and the end (mostly unrealised).

The details:

Despite the explosion in AI deal activity, returns (mostly on paper) are becoming more concentrated. Saam from Greylock frames today’s opportunity set as a barbell:

One end is first money: backing founders before there is traction, consensus, or a clear narrative. When this works, you don’t just invest in a company; you help create the conditions for it to later enter the “capital river”.

The other end is last money: large private rounds where very few investors can write the check, and alpha comes from price-setting and ownership rather than discovery.

What seems to be hollowed out (and hyper-competitive) is the middle: seed and early Series A rounds where traction exists, competition is intense, and pricing increasingly reflects consensus rather than insight.

So what:

This is not a startup quality problem. It’s a VC crowding problem where too many funds (mostly undifferentiated) are chasing consensus bets in the middle of that barbell. If you’re building in the middle, you can expect noise, price pressure, and probably less conviction than at other points of the hype-cycle.

3. After models and infra, vertical AI grows fast by replacing labor.

The details:

Vertical AI companies are scaling extremely fast, especially in professions built on expensive human labor and strong LLM–market fit (text-heavy work, as discussed in the Agentic Revolution).

This also reflects a shift in where AI investment has gone. The first wave flowed into models and infrastructure. What’s growing fastest now is the application layer where intelligence is turned into direct labor replacement and immediate ROI.

Legal AI is a good example (e.g. Harvey in the US, Legora in Europe). These systems replace large chunks of junior legal work at a fraction of the cost, creating immediate and often pretty undeniable ROI.

I recently spoke with a lawyer friend of mine, and it was one of the clearest conversations on product–market fit I’ve had. She told me that if her company removed the tool, her hours of work would expand dramatically (and she’d be extremely unhappy about that).

This pattern repeats across customer support, coding, content, sales prep, slide creation, transcription, and parts of medicine as we’ve been discussing here.

Wherever LLMs are unusually good at the underlying work and the human cost base is high, vertical AI pulls demand forward very aggressively, which explains the growth.

But this is unlikely to be the end state, and it is interesting to think about what happens when competition moves in (or when there are multiple “chosen ones” in a river of AI).

Once multiple comparable systems exist, models converge, and switching costs remain low, competition will likely push prices down. The surplus that initially accrues to the vendor starts flowing to the customer instead.

There may be real moats here like workflow depth, firm-specific knowledge, accumulated documents, cultural context etc. But history suggests these moats tend to be narrower than expected.

We’ve seen this before: cloud infrastructure followed the same arc, with huge early margins followed by price pressure as capabilities converged.

AI accelerates this dynamic because once multiple “Harveys” exist, intelligence stops being the differentiator and the fight shifts to price, bundling, and distribution.

Much of the value created shows up as cheaper services and higher output per professional, not necessarily higher vendor margins.

So what:

Vertical AI monetises intelligence by replacing humans, but that same dynamic makes long-term pricing power potentially more fragile once intelligence itself becomes abundant.

Which leads to the deeper and more interesting question: if intelligence is no longer scarce, where does durable power move next?

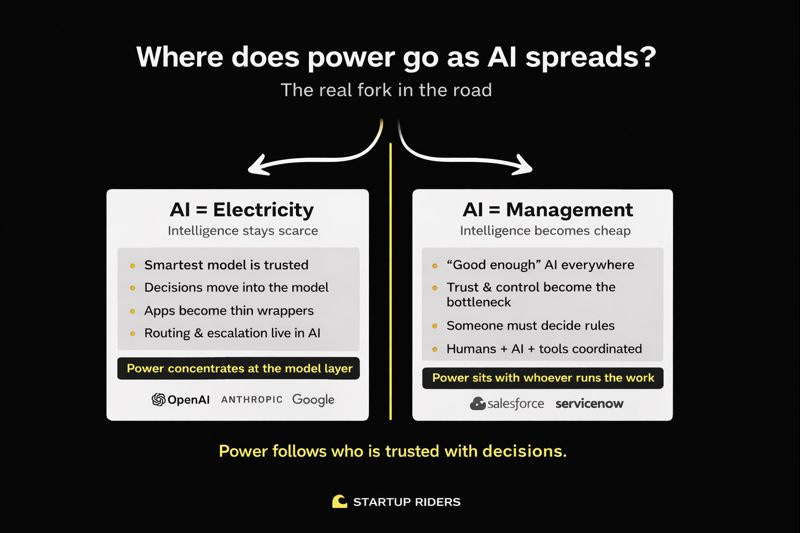

4. Where AI power goes depends on one thing: does intelligence stay scarce or become cheap?

The details:

At a high level, the entire AI market’s direction comes down to one question:

Does economically useful intelligence remain scarce, or does it become cheap and widely available?

By “intelligence” here I don’t mean understanding or judgment. I mean the new kind of machine intelligence we have today: systems that are extremely good at pattern matching, recall, and fluent output, but have no taste, no goals, and no real understanding of what matters.

As Naval puts it, AI predicts text (it doesn’t reason like humans). It kills memorization, lowers the cost of trying things, and acts as a force multiplier for people with good judgment BUT it does not replace judgment itself.

This matters because power flows to whoever is trusted with decisions (trust comes from proven good judgment), not just whoever provides the technology.

In my mind there are really two competing theses about where AI power ends up:

Thesis 1: Intelligence as infrastructure (“AI = Electricity”)

This is the foundation model thesis.

Think OpenAI, Anthropic, Google, Meta.

If intelligence stays scarce and trusted:

building and running the best models remains expensive (compute, energy, safety)

top models keep pulling ahead through scale and feedback loops

companies increasingly defer decisions to the smartest system available

In this world:

AI behaves like a utility (similar to cloud or electricity)

apps become thin wrappers

routing, prioritisation, and escalation happen inside the model

value concentrates at the model layer

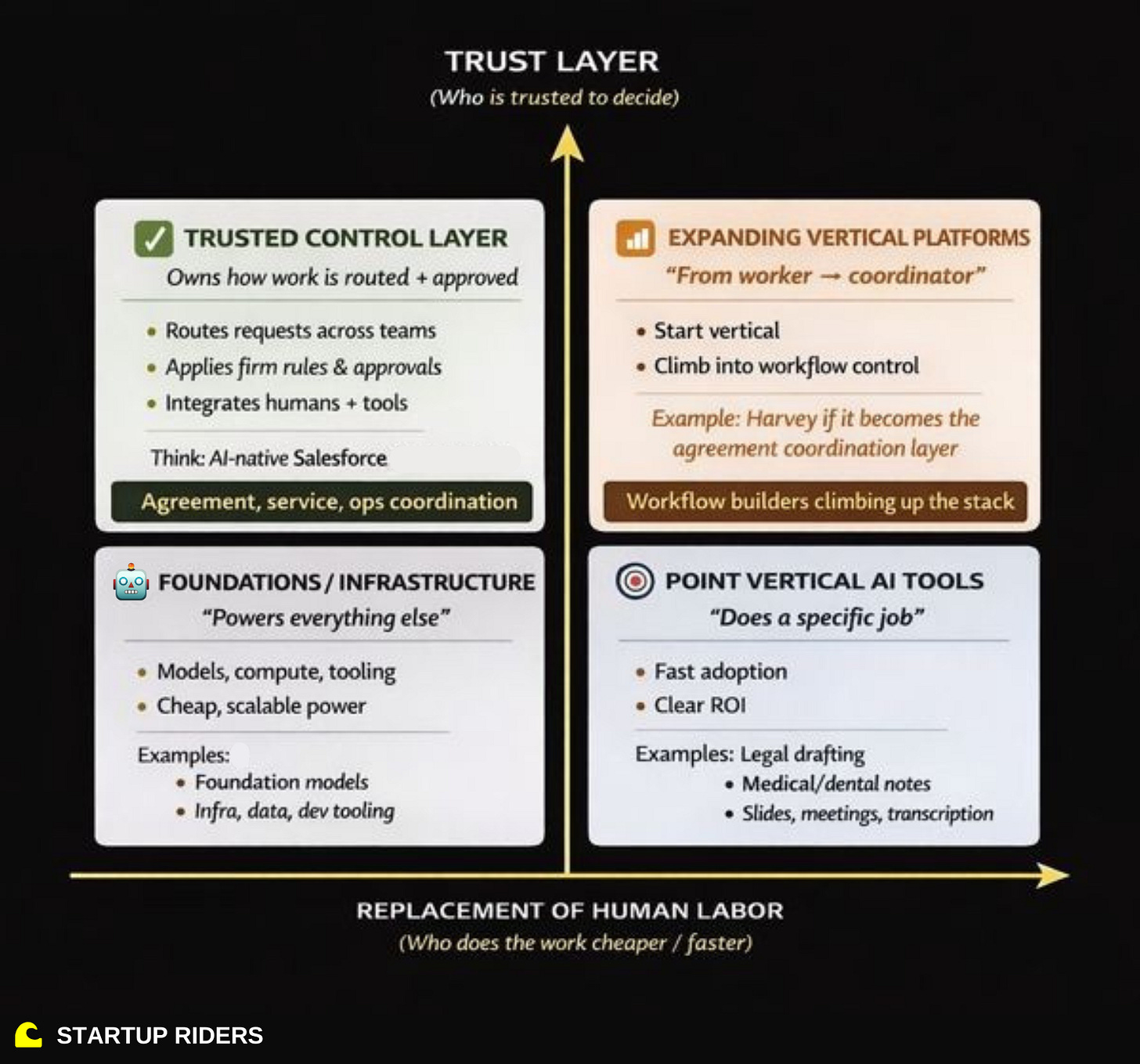

Thesis 2: Intelligence as coordination (“AI runs work”)

This is the systems-of-action thesis.

If intelligence becomes cheap and widely available:

“good enough” AI is everywhere

the bottleneck is no longer having intelligence

The hard problems become:

who is allowed to decide

under which rules

with which approvals

and who is accountable when something breaks

Those are coordination problems, not intelligence problems. Think of coordination as deciding who does what, in what order, under which rules, and who is responsible if it goes wrong. The intelligence is not choosing the goal but rather helping move work through a system of approvals, handoffs, and constraints without humans chasing each other.

In this world:

power shifts to systems that control workflows, rules, and decisions

intelligence is a commodity

whoever runs the work wins

This is similar to how Salesforce didn’t win by building better databases but by deciding how sales teams track leads, approve deals, forecast revenue, etc.
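To make “who is allowed to decide, under which rules, with which approvals, and who is accountable” a bit more concrete, here is a minimal Python sketch of a system of action. Everything in it is hypothetical and only for illustration: the `Rule` and `Request` types, the `route` and `decide` functions, the three roles, and the amount thresholds are assumptions, not a description of how Salesforce or any real product works. The point it tries to show is that a model could draft or recommend the deal, but the system still decides who may approve it and keeps the record.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Rule:
    """One policy line: which role may approve requests up to a given amount."""
    role: str
    max_amount: float


@dataclass
class Request:
    """A unit of work moving through the system (here, a hypothetical discount)."""
    description: str
    amount: float
    audit_log: list = field(default_factory=list)  # (approver, role, outcome) in order


def route(request: Request, rules: list[Rule]) -> str:
    """Return the lowest-authority role that is allowed to approve this request."""
    for rule in sorted(rules, key=lambda r: r.max_amount):
        if request.amount <= rule.max_amount:
            return rule.role
    raise ValueError("no role is allowed to approve this request")


def decide(request: Request, rules: list[Rule],
           approver: str, role: str, approved: bool) -> bool:
    """Record a decision, but only if the approver's role has enough authority."""
    required = route(request, rules)
    limits = {r.role: r.max_amount for r in rules}
    if limits.get(role, 0) < limits[required]:
        request.audit_log.append((approver, role, "rejected: insufficient authority"))
        return False
    request.audit_log.append((approver, role, "approved" if approved else "declined"))
    return approved


# Hypothetical approval thresholds, assumed purely for this example.
RULES = [Rule("rep", 1_000), Rule("manager", 10_000), Rule("vp", 100_000)]

deal = Request("discount on an enterprise renewal", 8_000)
print(route(deal, RULES))                           # manager
print(decide(deal, RULES, "alice", "rep", True))    # False
print(decide(deal, RULES, "bob", "manager", True))  # True
print(deal.audit_log)
```

Notice that nothing in the sketch depends on how smart the underlying model is. The rules table and the audit log are where the leverage sits, which is the core of the systems-of-action argument.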

So what:

As intelligence becomes cheap, judgment does not. Deciding what matters, when to act, and who is accountable still sits with humans. This is why models, vertical tools, and incumbents will probably start racing to move up into “coordination”.